How to build a TQM baseline (defects, COPQ, Cpk, NPS) before improvements

If you can't clearly describe "where we started," you can't credibly claim "we improved." A solid TQM baseline is your before-picture: the reference point you'll use to evaluate progress, validate results, and separate real improvement from noise.

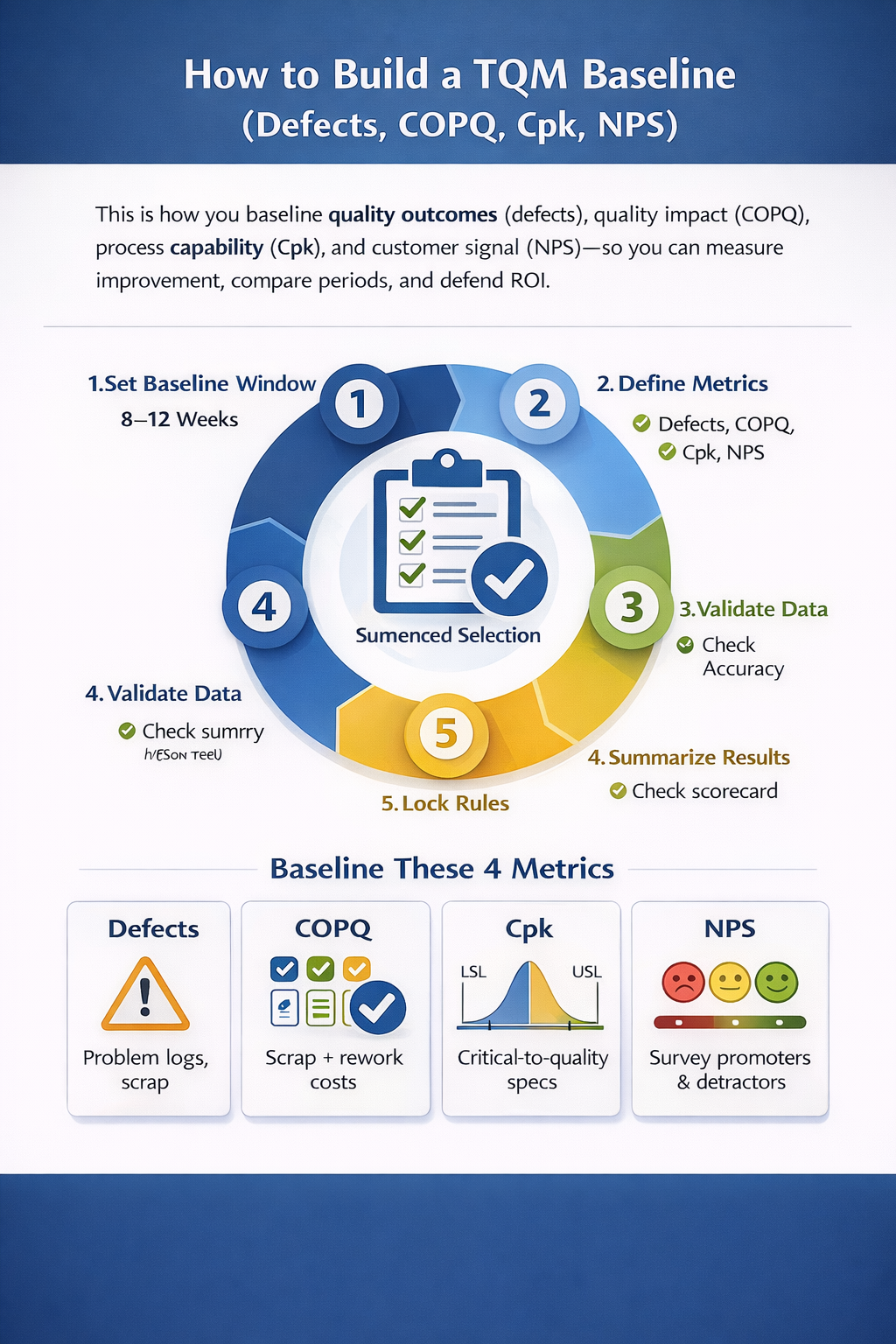

This page shows a practical, step-by-step way to build a baseline using four high-leverage measures:

· Defects (quality outcomes, speed, reliability)

· COPQ (financial impact of poor quality)

· Cpk (process capability vs. specifications)

· NPS (customer experience signal)

You'll leave with a baseline method you can defend in an audit, a leadership review, or a customer escalation.

A TQM baseline is a time-bound "starting point" for quality, cost, capability, and customer experience. Build it in five steps: (1) select a stable baseline window (typically 8-12 weeks); (2) define metric rules for defects (what counts, where it is logged, unit of measure), COPQ (scrap, rework, warranty, returns, concessions, expediting), Cpk (CTQs, specs, sampling plan, measurement system), and NPS (who you survey, when, and how you score); (3) validate data integrity with clear definitions and ownership; (4) summarize results in one scorecard that shows the baseline mean, variation, and key drivers; and (5) lock the baseline rules so future results use the same definitions, enabling apples-to-apples comparison and credible evaluation of TQM impact.

Why a Baseline Fails (and How to Prevent It)

Most baselines fail for one of these reasons:

1. Moving definitions: A "defect" changes depending on who reports it.

2. Mixed scopes: You baseline Plant A but claim savings for A+B+C.

3. Bad measurement: Instruments or appraisals are inconsistent (garbage in, garbage out).

4. No variance view: You report only averages and miss instability.

5. Customer metric mismatch: NPS is collected from the wrong segment or at the wrong moment.

Your goal isn't a perfect baseline. Your goal is a baseline that is repeatable, comparable, and trusted.

For a fuller view of how TQM is evaluated over time, see Evaluation of Total Quality Management.

Step-by-Step: Build the Baseline in 10 Moves

1) Define the baseline purpose (one sentence)

Examples:

· "Establish current-state quality and cost to evaluate TQM improvements quarterly."

· "Create a defensible pre-TQM reference for leadership and finance."

2) Freeze the scope

Write down:

· Product family / service line

· Sites / lines / teams included

· Start and end dates

· Included customers (segments, regions)

Rule: If it is not in scope, you do not claim it later.

3) Choose a baseline window that reflects "normal"

Typical options:

· 8-12 weeks for operational metrics (defects, COPQ drivers)

· 3-6 months if demand is seasonal or low volume

· Avoid known abnormal periods (major launches, strikes, one-time disruptions)

4) Define metric operational definitions (non-negotiable)

For each metric, document:

· What counts / what does not

· Unit of measure

· Data source

· Frequency

· Owner

· Calculation method

· Known limitations

This is the difference between measurement and opinions.
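One way to keep these fields together is a small immutable record per metric. The sketch below is only an illustration: the class name `MetricDefinition`, its field names, and every example value are hypothetical, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: a definition cannot be edited in place
class MetricDefinition:
    name: str
    counts: str          # what counts
    excludes: str        # what does not count
    unit: str            # unit of measure
    data_source: str
    frequency: str
    owner: str
    calculation: str
    limitations: str = ""
    version: str = "v1.0"


# Hypothetical example values, for illustration only.
dpu_definition = MetricDefinition(
    name="Defects per Unit",
    counts="Any nonconformance logged at final inspection",
    excludes="Cosmetic issues accepted under customer agreement",
    unit="defects / unit",
    data_source="Final inspection log",
    frequency="weekly",
    owner="Quality engineer, Line 1",
    calculation="total defects / total units",
)
```

Because the record is frozen, a refined definition has to be created as a new object with a new version string rather than overwriting the old one.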

5) Validate your measurement system (quick reality check)

Before you baseline Cpk (and sometimes defect counts), confirm the measurement system is credible:

· Are gauges calibrated?

· Are inspectors aligned on pass/fail?

· Are data fields consistently filled?

If measurement is unstable, your baseline becomes a baseline for confusion.

6) Collect baseline data (with checks)

Use simple checks:

· Missing values trend (are we "forgetting" to log defects?)

· Outlier review (big spikes explained?)

· Cross-check totals against production/shipments
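The three checks above could be sketched roughly as follows. The weekly records, the "3 x median" outlier screen, and the 2% tolerance are all assumptions chosen for illustration; pick thresholds that fit your own data.

```python
from statistics import median

# Hypothetical weekly records: (week, units_produced, defects_logged or None if missing)
weekly = [
    ("W1", 1000, 21),
    ("W2", 980, 19),
    ("W3", 1010, None),  # defect log missing for this week
    ("W4", 995, 23),
    ("W5", 1005, 88),    # spike that needs an explanation
    ("W6", 990, 20),
]


def missing_weeks(records):
    """Weeks with no defect count logged."""
    return [week for week, _, defects in records if defects is None]


def outlier_weeks(records, factor=3.0):
    """Weeks whose defect count exceeds factor x the median (a crude screen)."""
    counts = [d for _, _, d in records if d is not None]
    threshold = factor * median(counts)
    return [week for week, _, d in records if d is not None and d > threshold]


def totals_match(records, independent_total, tolerance=0.02):
    """Cross-check logged production against an independent total, e.g. shipments."""
    produced = sum(units for _, units, _ in records)
    return abs(produced - independent_total) <= tolerance * independent_total
```

A flagged week is a prompt to investigate, not an automatic exclusion: spikes that reflect real performance belong in the baseline.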

7) Separate level from variation

A baseline needs both:

· Average (typical performance)

· Variation (how predictable it is)

In TQM, stability often improves before the mean improves.
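A minimal sketch of reporting level and variation together, assuming one value per week. The data are hypothetical, and the limits use the individuals (XmR) chart convention of mean plus or minus 2.66 times the average moving range, which is one common choice rather than the only one.

```python
from statistics import mean, stdev

# Hypothetical weekly defect rates (% defective), for illustration only.
weekly_defect_rate = [2.1, 1.9, 2.4, 2.0, 2.6, 1.8, 2.2, 2.3]


def level_and_variation(values):
    """Return the mean, sample standard deviation, and XmR natural process limits."""
    centre = mean(values)
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    mr_bar = mean(moving_ranges)
    return {
        "mean": centre,
        "stdev": stdev(values),
        "lower_limit": centre - 2.66 * mr_bar,
        "upper_limit": centre + 2.66 * mr_bar,
    }


baseline_stats = level_and_variation(weekly_defect_rate)
```

Points outside the limits suggest instability in the baseline window and are worth explaining before the numbers are locked.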

8) Identify top drivers (Pareto)

For defects and COPQ, capture:

· Top defect types

· Top process steps causing issues

· Top cost categories

· Top customers/regions driving NPS pain
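A Pareto view is a sorted count with a running cumulative percentage. The sketch below assumes a flat list of defect labels; the labels themselves are hypothetical.

```python
from collections import Counter

# Hypothetical defect log: one label per recorded defect.
defect_log = [
    "scratch", "misalignment", "scratch", "missing part", "scratch",
    "misalignment", "scratch", "wrong label", "scratch", "misalignment",
]


def pareto(items):
    """Return (category, count, cumulative %) rows, largest category first."""
    counts = Counter(items).most_common()
    total = sum(n for _, n in counts)
    rows, running = [], 0
    for category, n in counts:
        running += n
        rows.append((category, n, round(100 * running / total, 1)))
    return rows


for row in pareto(defect_log):
    print(row)
```

The same function works for cost categories or NPS comment themes if you pass those labels instead.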

9) Build a one-page baseline scorecard

One page, leadership-ready:

· Defects (rate + top contributors)

· COPQ (total + category breakdown)

· Cpk (CTQs + worst capability)

· NPS (score + themes)

10) Lock baseline rules (change control)

If you later refine definitions, do it transparently:

· Keep the original baseline as measured

· Start a new definition series going forward

· Never rewrite history without documenting why

Metric Playbook: Defects (Build It the Right Way)

Pick the defect measure that matches your operation

Common choices:

· Defects per Unit (DPU) = total defects / total units

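· Defects per Million Opportunities (DPMO) = (defects / opportunities) x 1,000,000, where opportunities = units x defect opportunities per unit

· First Pass Yield (FPY) = units good the first time (no rework) / total units

· Rolled Throughput Yield (RTY) for multi-step processes = product of the FPY of each step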

Tip: If leadership is new to quality metrics, start with FPY + a defect rate. Make it easy to understand.
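The formulas above can be sketched as small functions. The function and parameter names are illustrative, and RTY is computed here as the product of the step-level first pass yields, which is the usual convention.

```python
from math import prod


def dpu(defects, units):
    """Defects per Unit."""
    return defects / units


def dpmo(defects, units, opportunities_per_unit):
    """Defects per Million Opportunities."""
    return defects / (units * opportunities_per_unit) * 1_000_000


def fpy(good_first_time, units):
    """First Pass Yield: share of units that pass without rework."""
    return good_first_time / units


def rty(step_yields):
    """Rolled Throughput Yield: product of each step's first pass yield."""
    return prod(step_yields)
```

For example, 30 defects on 1,000 units with 5 opportunities per unit gives a DPU of 0.03 and a DPMO of 6,000.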

Define "defect" in writing

Clarify:

· Is a cosmetic issue a defect?

· Does rework count as defect, or only escapes?

· Do customer complaints count the same as internal scrap?

Recommended defect baseline output

Include:

· Defect rate trend by week

· Pareto of defect types

· Pareto by process step / line / team

· A short list of defect rules (your operational definition)

Metric Playbook: COPQ (Cost of Poor Quality)

COPQ translates quality into financial language. For baselining, keep it practical and auditable.

COPQ categories to baseline

· Internal failure: scrap, rework labor, re-inspection, retest, downtime tied to defects

· External failure: warranty, returns, credits, concessions, complaint handling, field service

· Extraordinary costs: expediting, premium freight, containment, sorting, third-party inspection

COPQ rules that prevent "fantasy savings"

· Use actuals when possible (GL accounts, warranty claims, credit memos)

· If you must estimate, document assumptions: labor rate used, standard time per rework, and scrap valuation method

· Do not double count (for example, rework labor + downtime for the same event)

Recommended COPQ baseline output

· Total COPQ per week/month

· COPQ as % of sales (if relevant)

· Breakdown by category (scrap, rework, warranty/returns, expediting)

· Top 5 cost drivers with short explanations
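A rough sketch of the COPQ rollup, assuming each cost line carries an event ID. The rule used here to avoid double counting (charge each event once and hold later lines for review) is a deliberately crude assumption, and all amounts are hypothetical.

```python
from collections import defaultdict

# Hypothetical cost lines: (event_id, category, amount). Values are illustrative only.
cost_lines = [
    ("E1", "scrap", 1200.0),
    ("E2", "rework", 800.0),
    ("E2", "downtime", 300.0),  # second cost line for the same event
    ("E3", "warranty/returns", 2500.0),
    ("E4", "expediting", 500.0),
]


def copq_summary(lines, sales):
    """Total COPQ, category breakdown, and % of sales, charging each event once."""
    seen_events = set()
    by_category = defaultdict(float)
    skipped = []
    for event_id, category, amount in lines:
        if event_id in seen_events:
            skipped.append((event_id, category, amount))  # hold for manual review
            continue
        seen_events.add(event_id)
        by_category[category] += amount
    total = sum(by_category.values())
    return {
        "total": total,
        "by_category": dict(by_category),
        "pct_of_sales": 100 * total / sales,
        "skipped": skipped,
    }


summary = copq_summary(cost_lines, sales=100_000.0)
```

Keeping the skipped lines visible, rather than silently dropping them, leaves an audit trail for finance to confirm which costs were excluded and why.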

Metric Playbook: Cpk (Process Capability)

Cpk answers: "Can this process consistently meet spec?"

Choose the right CTQs

CTQs (Critical-to-Quality characteristics) should be:

· Customer-relevant

· Measurable

· Specified (USL/LSL exist)

· Tied to the value stream you are improving

Baseline Cpk correctly

Minimum checklist:

· Confirm spec limits (LSL/USL) are current and agreed

· Define the sampling plan: what counts as one sample, how many per shift/day/week, and at what process step

· Verify measurement system quality (calibration, repeatability concerns)

· Ensure you are not mixing different products/specs in one Cpk

Recommended Cpk baseline output

· Table of CTQs with: LSL, USL, mean, sigma, Cpk, sample size, date range

· Highlight the worst 3 CTQs (lowest Cpk)

· Flag CTQs with evidence of instability (special-cause behavior)

Practical note: If the process is unstable, Cpk becomes less meaningful. In that case, your baseline should emphasize stability first, then capability.
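As a minimal sketch, Cpk can be computed as the smaller of the two distances from the mean to a spec limit, each divided by three sigma. This version assumes a two-sided spec and uses the overall sample standard deviation as the sigma estimate, which is a simplification; many references estimate sigma from within-subgroup variation instead. The measurements are hypothetical.

```python
from statistics import mean, stdev


def cpk(measurements, lsl, usl):
    """Cpk = min((USL - mean) / (3 * sigma), (mean - LSL) / (3 * sigma))."""
    mu = mean(measurements)
    sigma = stdev(measurements)  # overall sample sigma: a simplifying assumption
    return min((usl - mu) / (3 * sigma), (mu - lsl) / (3 * sigma))


# Hypothetical measurements for one CTQ, with LSL = 9.4 and USL = 10.6.
diameters = [9.8, 10.0, 10.2, 10.0, 10.0]
baseline_cpk = cpk(diameters, lsl=9.4, usl=10.6)
```

Five readings are far too few for a real study; the short list only keeps the arithmetic easy to follow. Record the sample size and date range next to every Cpk you report.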

Metric Playbook: NPS (Net Promoter Score)

NPS is not "the voice of the customer." It is one signal. Baseline it carefully so it does not mislead you.

Baseline NPS with clean rules

Define:

· Who receives the survey (which customers / segments)

· Trigger timing (post-delivery, post-support, quarterly relationship survey)

· Channel (email, SMS, in-product)

· Language and consistency across regions

· Minimum response threshold (do not report NPS from tiny samples as truth)
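A sketch of NPS scoring with a minimum response threshold, using the standard 0-10 scale where 9-10 are promoters and 0-6 are detractors. The threshold of 30 responses is an arbitrary assumption for illustration; set your own and document it.

```python
def nps(scores, min_responses=30):
    """Return NPS (-100 to 100), or None when there are too few responses to report."""
    if len(scores) < min_responses:
        return None
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical responses: 20 promoters, 10 passives, 10 detractors.
responses = [10] * 20 + [8] * 10 + [3] * 10
baseline_nps = nps(responses)
```

Returning None for small samples forces the scorecard to show "not enough data" instead of a number that looks precise but is not.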

Include the "why," not just the score

The baseline should capture:

· NPS score and response count

· Top themes from comments (delivery, defects, support response time, billing issues)

· Segment view (key accounts vs. long tail)

Recommended NPS baseline output

· NPS trend by month/quarter

· Themes with representative paraphrases (not cherry-picked)

· One action-oriented summary: "What is driving detractors right now?"

The Baseline Scorecard Template (One Page)

Use a single scorecard to make your baseline usable:

A) Quality Outcomes (Defects)

· Defect rate (primary)

· FPY/RTY (if applicable)

· Top 3 defect categories

B) Financial Impact (COPQ)

· Total COPQ

· COPQ breakdown (scrap, rework, warranty/returns, expediting)

· Top 3 cost drivers

C) Capability (Cpk)

· CTQ list with Cpk

· Worst CTQ highlighted

· Notes on stability

D) Customer Signal (NPS)

· NPS score + response count

· Top themes (3-5)

· Key segment callouts

E) Baseline Integrity

· Date range

· Scope statement

· Data owners

· Definition version (v1.0)

Common Pitfalls (and How to Avoid Them)

· Pitfall: "We improved!" (but you changed defect criteria). Fix: Freeze definitions; version changes transparently.

· Pitfall: COPQ "savings" are theoretical. Fix: Tie COPQ to actual financial lines or documented estimates.

· Pitfall: Cpk looks fine because you cherry-picked stable days. Fix: Use a representative baseline window; show variation.

· Pitfall: NPS improves because you surveyed only happy customers. Fix: Standardize sampling rules and track response counts.

· Pitfall: Metrics fight each other (lower defects but slower delivery). Fix: Pair the baseline with a small set of balancing measures (cycle time, on-time delivery) in your internal dashboard.

Fast Next Step (How to Use This Baseline to Evaluate TQM)

Once your baseline is built, evaluation becomes straightforward:

1. Compare post-TQM performance to baseline using the same definitions

2. Report both mean change and variation change

3. Explain drivers with Pareto shifts (defect types/cost categories/themes)

4. Connect projects to metric movement (what changed, where, and why)

This is how TQM stops being "a program" and becomes measurable operational control.
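Steps 1 and 2 can be sketched as a single comparison that reports the change in both mean and variation. The weekly values are hypothetical, and the sketch assumes both periods were measured with the same metric definition.

```python
from statistics import mean, stdev


def compare_to_baseline(baseline, current):
    """Change in level and in variation between two periods (current minus baseline)."""
    return {
        "mean_change": mean(current) - mean(baseline),
        "stdev_change": stdev(current) - stdev(baseline),
    }


# Hypothetical weekly defect rates (% defective), same definition in both periods.
baseline_weeks = [2.0, 2.4, 1.8, 2.2]
post_tqm_weeks = [1.6, 1.7, 1.5, 1.6]
change = compare_to_baseline(baseline_weeks, post_tqm_weeks)
```

A negative value on both lines means the defect rate dropped and became more predictable. With only a few points per period, treat the result as a signal to keep watching rather than as proof.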
